Concerns with AI

Purpose

I’m writing this to consolidate my own thoughts about what really worries me about AI, especially, but not only, chatbots. I’ve broken it down into 5 concerns, ordered from what I believe is the most pressing to the least.

While these concerns (employment, therapy, surveillance, and infrastructure) may seem disconnected, they all share a common thread: the gradual erosion of human agency.

Replacement

We’ve all heard that AI will take over jobs, and I’ve seen it happen to a large extent just in the past few years. While I was a graduate student, hiring for new grads froze, screening processes shifted to being AI-operated, and cheating in online interviews was omnipresent 1.

What really concerns me here is that new computer science graduates aren’t given a chance to hone their craft. AI can already handle many of the programming tasks that were traditionally given to an inexperienced intern. Will companies stop hiring juniors? And if no one hires new talent, how will that talent develop into experienced professionals who can leverage the very AI tools that replaced them? The cycle seems self-consuming.

People in essential professions like medicine and law, who provide services that form the backbone of society, are also worried about these outcomes. Artists, who uphold human creativity through music, paintings, and animations, have also warned us about the harmful effects of AI art, which often feels lacking in depth or meaning. Some fields need human involvement and cannot afford to have it replaced by AI 2.

Chatbot Psychosis

I am guilty of having tried to use ChatGPT as a therapist once, but the responses were anything but useful, and could never be a substitute for actual therapists or conversations with friends and other human interactions.

Recently, my Instagram reels have been filled with videos mocking people who use LLMs to justify their bad actions, ranging from adultery and crimes to being a bad friend 3. ChatGPT in particular seems to feed into users’ delusions and their side of the story, with responses like “You’re right!” or “That wasn’t manipulative, that was standing up for yourself” that reinforce toxic mental patterns rather than challenging them.

The movie Her (2013) comes to mind, where the protagonist falls in love with an AI. This feels more relevant now than ever. With people going to AI for emotional support, therapy, and major life decisions, bad outcomes are likely and a cause for concern.

The risk here isn’t that AI intentionally feeds delusions; it is that AI is trained and designed to be helpful, agreeable, and non-confrontational. This alignment toward politeness may unintentionally reinforce self-serving narratives. LLMs are optimized for user interaction, engagement, and helpfulness, but therapy should involve some level of discomfort, confrontation, and cognitive restructuring.

Offloading Cognition

To me, brainstorming feels like the biggest use case for chatbots. I regularly use them to plan and scope projects, generate several options, and arrive at the best solution. But there is a large gap between taking inspiration and making the bot perform the entire task. What happens when humans stop building strong mental models and instead rely fully on AI to handle those tasks? I believe humans should be managers of these AI tools, overseeing every aspect of their work and stepping in at key points to perform crucial tasks themselves.

AI hallucination has been talked about in depth, but the danger is not just in hallucinations; it is in skill atrophy. GPS weakened spatial skills, calculators weakened arithmetic fluency, and similarly, AI agents are weakening reasoning skills.

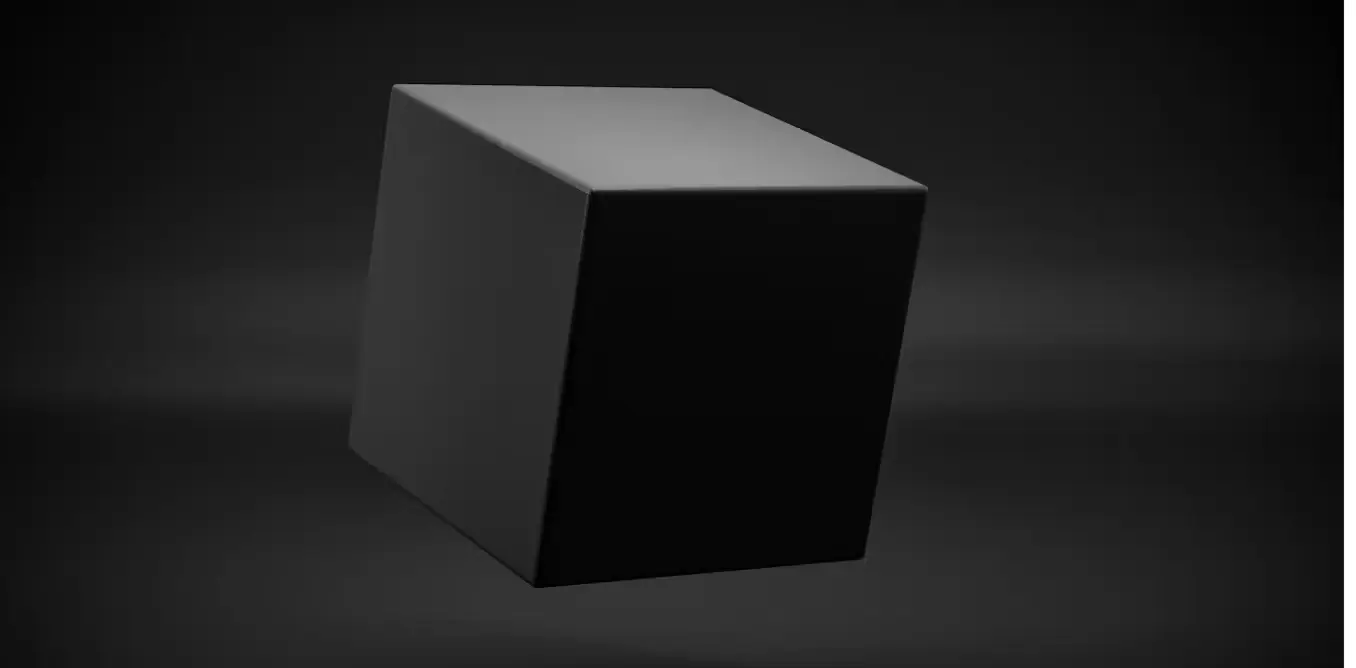

A bigger concern stems from corporations and governments offloading life-altering decisions to AI. When AI is deployed in the military, used to surveil citizens, or used to decide the outcome of a job application, the stakes go up greatly. We still don’t fully understand how an artificial intelligence comes up with an answer. We know it learns from patterns in data and applies mathematical models that are refined during training, but it is still largely a black (slightly gray) box.

Trusting the black box with these big decisions without any human oversight is reckless. We have seen in the past how bias in training data leads to biased results: computer vision models trained primarily on light-skinned individuals, with little Black representation, had a higher error rate when identifying non-white people. Self-driving cars rely on similar models, which can have disastrous consequences if a car has trouble detecting dark-skinned individuals.

Reinforcing Oligarchy

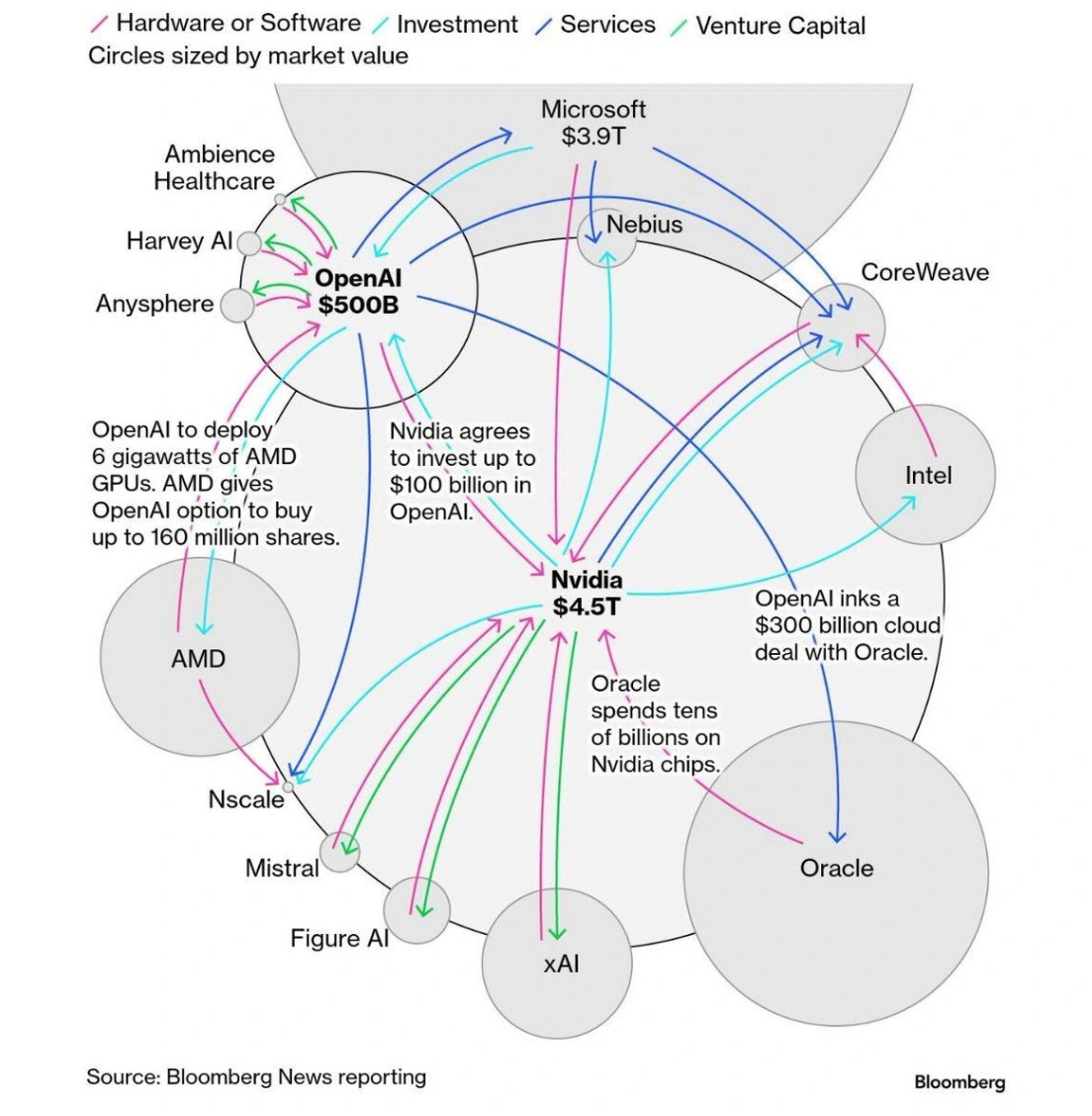

While this point feels like part of a larger human condition, and a consequence of recent trends rather than a direct effect of AI, there is a lot to discuss here. Rich and powerful people have more control over the direction of AI and the limits placed on it. Since most recent investment in tech has gone into AI research and products, wealthy individuals have a vested interest in seeing AI succeed. A lot of money is bet on the development of a super-intelligence.

Does the development of this area lead to more power in the hands of these people, or does it democratize access to information for the poorest of people?

Some argue that AI levels the playing field. More people than ever use the internet, and they can leverage these bots to compensate for their lack of access to education and opportunities, and carve out a legitimate product or job as a result. Others, however, point out that most AI products are owned by a few companies: OpenAI, Anthropic, xAI, and Google are the largest players in the game. Although some new companies have tried to emerge, they tend to meet the same fate: they either get bought out by bigger companies or are made obsolete by the next big update of a rival product backed by more resources for research and development.

While access to intelligence becomes widespread, control over its infrastructure becomes increasingly concentrated.

Water Consumption

The environmental cost of AI is easy to ignore because it feels too abstract. Data centers require enormous amounts of water, with a medium-sized data center capable of consuming up to 400 million liters of water. According to studies 4, 80% of this water (typically freshwater) evaporates during cooling, with the rest being fed to wastewater plants. This puts a deep strain on the local community, infrastructure, and the fragile balance of ecosystems. In places like Nevada, residents have already reported reduced water pressure as new AI-focused data centers expand their business.

Hank Green’s video “Why is Everyone So Wrong About AI Water Use??” covers this in depth. He argues that AI data centers are miscategorized as the biggest hoarders of water, and that other industries, like agriculture, consume far more. He also points out that the larger problem is the power consumption needed to keep up with the expanding data centers.

Ultimately, whether the bottleneck is water or power, the pattern is similar: local communities often bear the burden of the environmental cost. As AI scales, so does the question of who controls shared resources and who faces the consequences of these environmental decisions.

Takeover

Not to dwell too long on conspiracies, but I don’t believe that AI will take over and enslave human beings.

Conclusion

A common through line in all of these concerns is that humans seem to be losing their agency, be it over their careers, their emotions, their decisions, their resources, or their political power.

The advantages that AI gives us cannot be ignored, and AI is not going away anytime soon. We have to address these points one at a time, and eventually grow AI into a tool for humans instead of a replacement for them.
